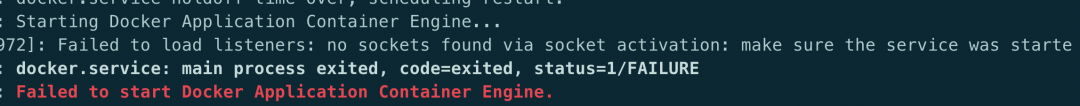

As soon as I deleted the first namespace (removing the first Viya deployment), the sas-crunchy-postgres pod magically changed its status from PENDING to RUNNING to READY. So, lesson learned for me: 40 cores is clearly not enough to deploy 2 Viya environments with a SAS Visual Machine Learning order. Even though my nodes' CPUs were clearly not busy, Kubernetes was right! The message "4, insufficient CPU" now makes sense (in terms of resource allocation).

The implication of this interesting troubleshooting adventure is that it reminds us that Kubernetes is really a "reservation system". Just like good old YARN, Kubernetes simply keeps count of all the containers' resource requests for each node and makes its decisions to allow or reject new pods based on that. So please keep in mind that the Kubernetes scheduler does not make its decisions based on the actual real-time CPU or memory utilization. The container resource requests really matter: if they are too different from the expected/observed real usage, we could end up with a substantial underutilization of the infrastructure capacity… It will be important to keep that in mind, for example when defining custom resource requests for the CAS and Compute Servers.
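To make the "reservation system" idea concrete, here is a minimal Python sketch of the bookkeeping described above. It is only a toy model and not the real scheduler: the class name, the namespace names (viya1, viya2), the pod name big-pod and all the millicore figures are assumptions made up for the illustration.

```python
class NodeLedger:
    """Toy model of one node: it only tracks CPU *requests*, never real usage."""

    def __init__(self, allocatable_m):
        self.allocatable_m = allocatable_m  # allocatable CPU, in millicores
        self.reservations = {}              # (namespace, pod) -> requested millicores

    def reserved_m(self):
        return sum(self.reservations.values())

    def try_schedule(self, namespace, pod, request_m, current_usage_m=0):
        # current_usage_m is deliberately ignored: only reservations count.
        if self.reserved_m() + request_m > self.allocatable_m:
            return False  # the pod would stay Pending ("insufficient CPU")
        self.reservations[(namespace, pod)] = request_m
        return True

    def delete_namespace(self, namespace):
        # Deleting a namespace releases every reservation made by its pods.
        self.reservations = {
            key: value for key, value in self.reservations.items()
            if key[0] != namespace
        }


# Hypothetical numbers: an 8-core node almost fully reserved by a first deployment.
node = Node = NodeLedger(allocatable_m=8000)
node.try_schedule("viya1", "big-pod", 7900)

# The node is nearly idle (500m really used), yet the small pod is rejected.
before = node.try_schedule("viya2", "sas-crunchy-postgres", 150, current_usage_m=500)

node.delete_namespace("viya1")
after = node.try_schedule("viya2", "sas-crunchy-postgres", 150, current_usage_m=500)

print(before, after)
```

The pod is rejected while the first namespace holds its reservations and accepted once they are released, whatever the measured usage is.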

The CPU request percentages are near or equal to 100% for all the nodes that could accept the new pod. intnode04 is tainted to only accept CAS pods, and the 4 other nodes (intnode01, 02, 03 and 05) are full in terms of CPU requests… That explains why my sas-crunchy-postgres pod cannot be immediately scheduled by Kubernetes and stays stuck in a PENDING state. If you are using Lens and have deployed the monitoring tool, you should also be able to see that there is a general "under-sizing" issue, with the sum of requested CPU almost corresponding to the number of available cores in the cluster (allocatable capacity): the total of all the CPU requests barely fits in the "allocatable" cluster capacity. We can also notice that much more CPU is requested than actually used (it could of course be different as soon as some users start to use the Viya products).
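The reasoning above (one node reserved for CAS, four nodes full in terms of requests) can be sketched in a few lines of Python. The node names come from my cluster, but the allocatable and requested values below are invented for the example, and the taint is reduced to a simple boolean, which is a big simplification of real taints and tolerations.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    allocatable_m: int      # allocatable CPU in millicores (hypothetical values)
    requested_m: int        # sum of CPU requests of the pods already on the node
    cas_only: bool = False  # simplified stand-in for the CAS taint

    @property
    def request_pct(self) -> float:
        return 100.0 * self.requested_m / self.allocatable_m


def candidate_nodes(nodes, pod_request_m, tolerates_cas_taint=False):
    """Return the names of the nodes where the pod's CPU request still fits."""
    result = []
    for n in nodes:
        if n.cas_only and not tolerates_cas_taint:
            continue  # tainted node: skipped for pods without the toleration
        if n.requested_m + pod_request_m <= n.allocatable_m:
            result.append(n.name)
    return result


nodes = [
    Node("intnode01", 8000, 7950),
    Node("intnode02", 8000, 7900),
    Node("intnode03", 8000, 8000),
    Node("intnode04", 8000, 1000, cas_only=True),
    Node("intnode05", 8000, 7990),
]

for n in nodes:
    print(f"{n.name}: {n.request_pct:.0f}% of allocatable CPU requested")

# A small pod (150m) without the CAS toleration finds no candidate node.
print(candidate_nodes(nodes, pod_request_m=150))
```

With these made-up figures, the only node with free reservations is the tainted one, so the list of candidates is empty and the pod stays pending.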

In the crunchy pod event log, there was also a message talking about "insufficient CPU". However, when I looked at the CPU usage (in Lens or by running the kubectl top nodes command), none of my Kubernetes nodes seemed particularly busy on their CPU… There seems to be sufficient room for more CPU utilization, wouldn't you agree? I thought: "OK, maybe the sas-crunchy pod wants a lot of CPU?" So, I checked the sas-crunchy-postgres pod containers' resource requests. But again, that does not seem to be the issue: the pod's individual container requests are pretty low, and the total of all the container CPU requests in the pod is less than 0.2 core. So why does Kubernetes keep my sas-crunchy-data-postgres pod pending forever and complain about insufficient CPU? Well, the answer appears in the "cpu" aggregated requests reported in the nodes' "Allocated Resources" table… When I saw it, I finally understood what my issue was. Look at the CPU requests on the nodes (the command to get a node's allocated resources is simply kubectl describe node).
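As a side note, Kubernetes expresses CPU requests either in cores ("1", "0.5") or in millicores ("100m"), which makes it easy to misread the totals. Below is a small Python sketch that converts those quantities and sums the requests of a pod's containers. The container names and values are hypothetical, not the real ones from the sas-crunchy pod, and the parser only handles the plain and "m" forms.

```python
def cpu_to_millicores(quantity: str) -> int:
    """Convert a CPU quantity such as '100m', '0.5' or '2' into millicores."""
    quantity = quantity.strip()
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def pod_cpu_request_millicores(container_requests: dict) -> int:
    """Sum the CPU requests of all the containers of a pod."""
    return sum(cpu_to_millicores(q) for q in container_requests.values())


# Hypothetical container names and requests, for illustration only.
crunchy_containers = {"database": "100m", "exporter": "50m"}

total = pod_cpu_request_millicores(crunchy_containers)
print(f"{total}m = {total / 1000:.2f} core")
```

A pod like this one asks for well under 0.2 core, so its own request cannot be what fills up the nodes.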

I experienced something very interesting this morning with a Viya 4 deployment (it was something that I knew but had never experienced in such a visible manner). You might already be aware of it or have seen it in your Viya environment, but I think it is key to understand how Kubernetes manages resource requests. So, I thought I would write a very short blog (for once) to share!

Yesterday, I was playing with the Deployment Operator deployments for stable 2021.2.1 and I started the deployment of 2 environments/namespaces in my cluster (the first one from a Custom Resource file with the "user-content" stored in an external GitLab, then one with an "inline" CR). But this morning I noticed that the second environment was not up yet (although it had the whole night to complete). After some investigation, it turned out that the culprit was the sas-crunchy-postgres pod, which remained in a pending state (even after several restart attempts).
